Career Center Impact Measurement: Why Nobody Has It Figured Out Yet

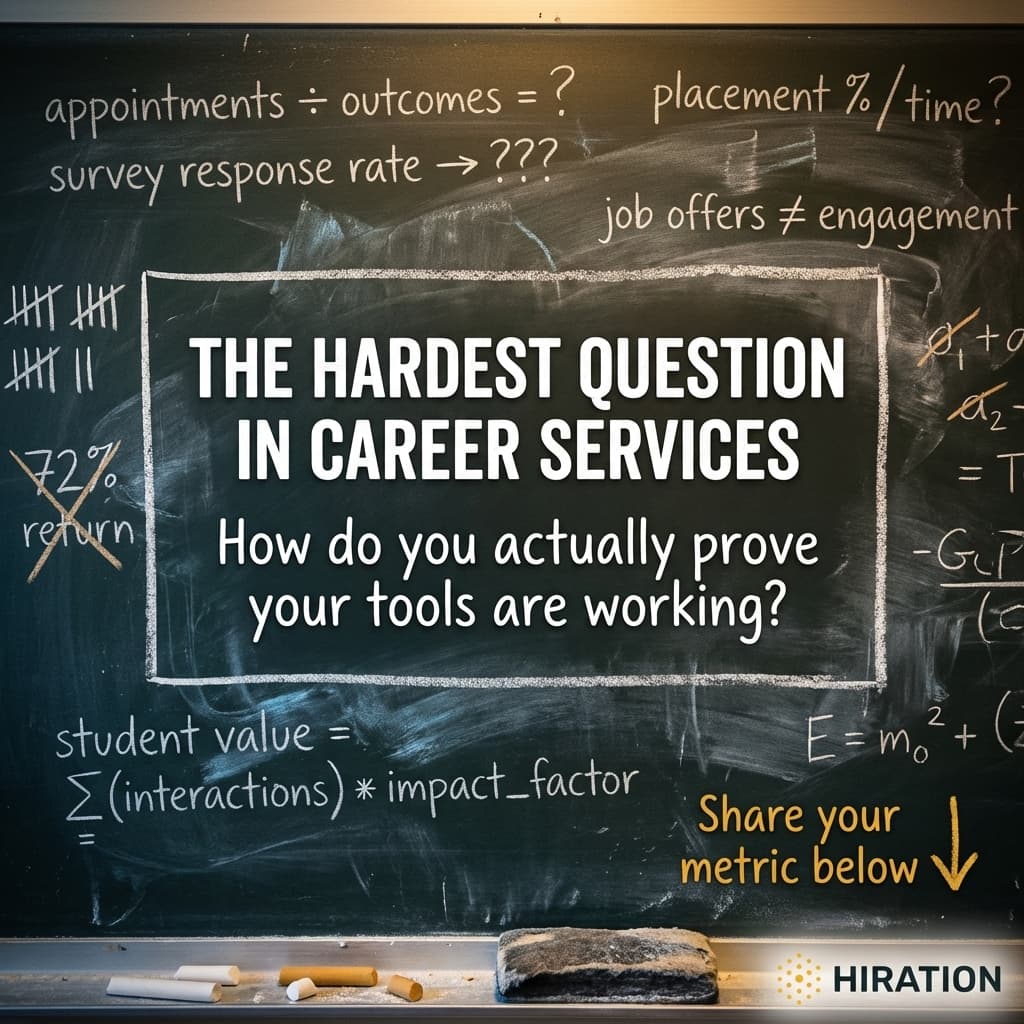

Most career centers are measuring the wrong things. Not because they're careless, but because the metrics that are easy to collect have almost nothing to do with the outcomes that actually matter.

A director at a regional university told us recently that their provost wanted one number to justify renewing their AI career tool subscription. One number. The director had platform login counts, resume review completions, student satisfaction ratings, and appointment volume. All trending up. None of it landed. The provost wanted to know if students were getting better jobs faster. That's the gap almost every career center is stuck in right now.

Usage Data Feels Good But Proves Little

When a career center deploys an AI tool, whether it's a resume reviewer, an interview coach, or a job-matching platform, the first instinct is to track adoption. How many students logged in? How many completed an interaction? These numbers are easy to pull and easy to present in a slide deck.

But here's the problem. A student who logs into an AI resume tool three times might have gotten a perfect resume on the first pass. Or they might have rage-quit twice before finishing on the third attempt. The number "3 logins" tells you nothing about what happened. Career centers at mid-size institutions often report 60-70% platform adoption rates and still can't demonstrate improved outcomes. Adoption is a prerequisite for impact. It isn't impact.

The counter-argument is fair: you can't have outcomes without engagement, so tracking engagement is a reasonable proxy. True. But proxies become dangerous when decision-makers start treating them as the real thing. A VP of student affairs who sees "4,200 AI interactions this semester" might nod approvingly. Or they might ask what those interactions actually changed. You want to be ready for the second question.

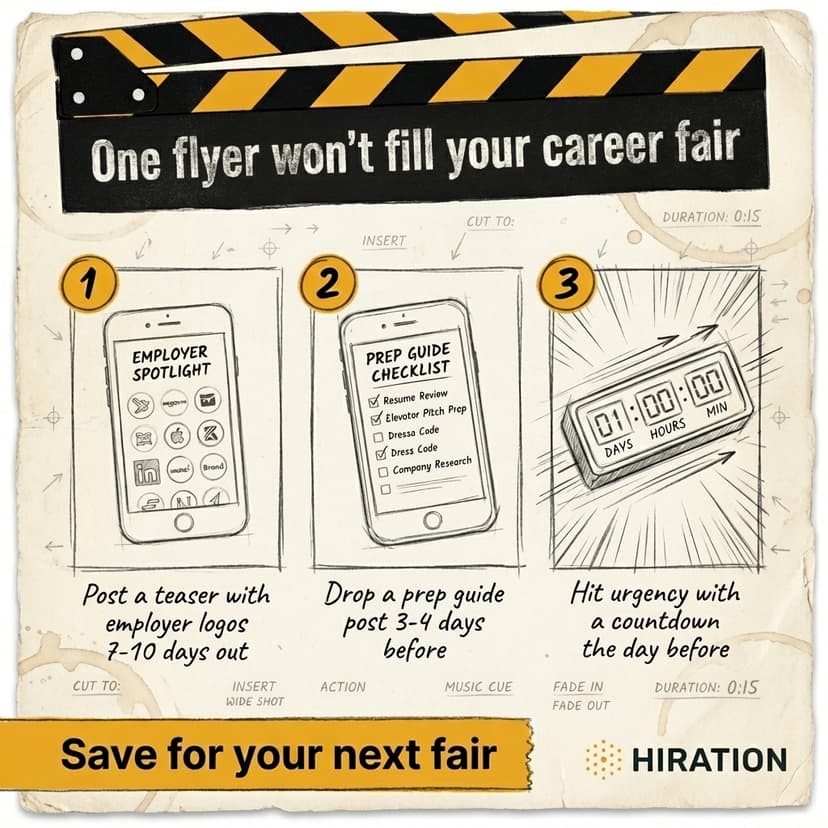

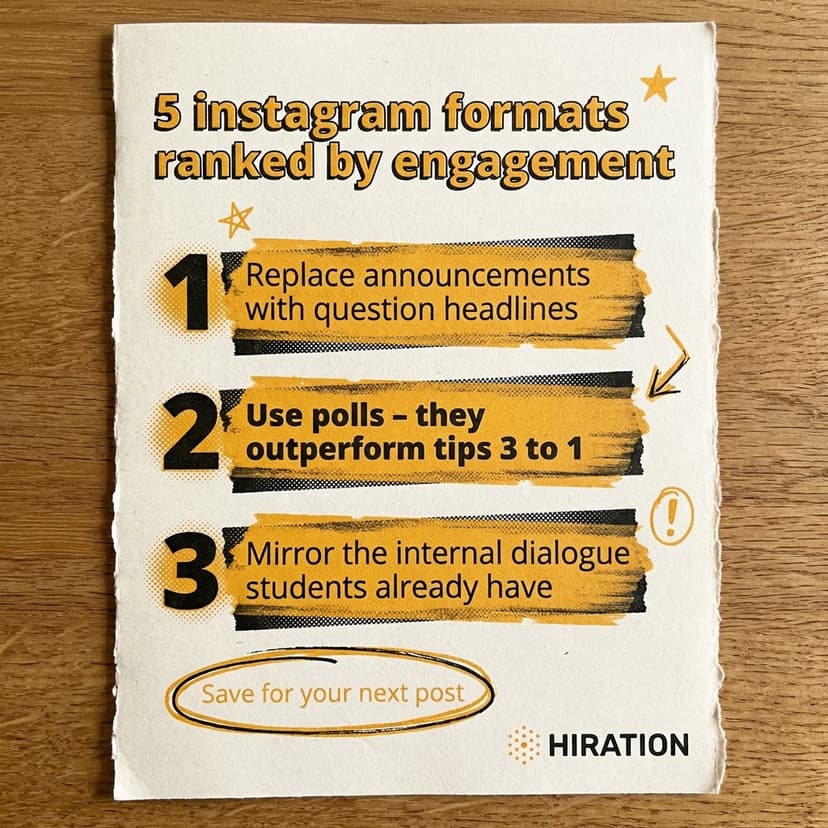

First-Destination Data Is the Gold Standard Nobody Can Afford to Wait For

First-destination surveys, which track where graduates land within six months, remain the closest thing to a true outcome measure. But knowledge rates, NACE's term for the share of graduates whose outcomes an institution actually has on record, vary wildly by institution, with some schools capturing data on 65% of graduates while others struggle to break 30%. And even a strong knowledge rate comes with a timing problem. You won't know this year's outcomes until next year. That makes first-destination data nearly useless for mid-cycle decisions about technology renewals.

So what fills the gap? The career centers we see making the strongest case for their tools are stitching together what we'd call a "trajectory story." They connect three data points in sequence. First, the student engaged with the AI tool (usage). Second, the student took a measurable next step, like applying to a position, scheduling a counselor meeting, or attending an employer event (behavior change). Third, the student reported a positive outcome on the first-destination survey or in a follow-up check-in (result).

No single metric captures this. The trajectory does. And building it requires intentional data architecture, connecting your career platform data to your CRM to your survey responses. That's hard. A 3-person career center serving 5,000 students doesn't have a data engineer on staff. But even rough versions of this approach outperform a spreadsheet of login counts.
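For a sense of what a rough version might look like, here is a minimal sketch in Python. It assumes all three sources can be exported and keyed by a shared student ID, which is often the hard part in practice. The dictionaries, field names, and sample values are hypothetical, not taken from any particular platform or CRM.

```python
# Hypothetical exports, each keyed by a shared student ID (an assumption;
# real systems may need an ID-matching step first).
tool_usage = {"s1": 3, "s2": 1, "s3": 2}  # AI tool sessions per student
next_steps = {"s1": ["applied"], "s3": ["career_fair", "applied"]}  # follow-up actions
survey_outcomes = {"s1": "employed", "s2": "seeking"}  # first-destination responses


def build_trajectories(usage, steps, outcomes):
    """Return one record per tool user: usage, behavior change, result."""
    records = []
    for student_id, sessions in sorted(usage.items()):
        records.append({
            "student_id": student_id,
            "sessions": sessions,
            "next_steps": steps.get(student_id, []),
            "outcome": outcomes.get(student_id),  # None means no response yet
        })
    return records


def complete_trajectories(records):
    """Keep students with all three links: usage, a next step, a reported outcome."""
    return [r for r in records if r["next_steps"] and r["outcome"] is not None]


trajectories = build_trajectories(tool_usage, next_steps, survey_outcomes)
complete = complete_trajectories(trajectories)
print(f"{len(complete)} of {len(trajectories)} tool users have a full trajectory")
```

Students with a missing link are worth keeping in the record set rather than dropping, since the gaps show where the data connections break down.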

The Metric That Actually Moves Budgets

Want to know what we've seen work in real budget conversations? Conversion rates. Specifically, the percentage of students who move from one stage to the next in their career readiness journey. What share of students who used the AI resume tool went on to apply for jobs through the platform? What share of those who completed a mock interview attended a career fair within 30 days?
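A stage-to-stage conversion rate like the 30-day career fair question can be sketched in a few lines of Python. The student IDs, dates, and the `windowed_conversion` helper below are illustrative assumptions, not a feature of any specific career platform.

```python
from datetime import date, timedelta

# Hypothetical records: student ID -> date the student reached that stage.
mock_interviews = {
    "s1": date(2024, 9, 2),
    "s2": date(2024, 9, 10),
    "s3": date(2024, 10, 1),
}
career_fair_attendance = {
    "s1": date(2024, 9, 20),
    "s2": date(2024, 11, 15),
}


def windowed_conversion(first_stage, second_stage, window_days=30):
    """Share of first-stage students who reached the second stage within the window."""
    if not first_stage:
        return 0.0
    window = timedelta(days=window_days)
    converted = 0
    for student_id, start in first_stage.items():
        follow_up = second_stage.get(student_id)
        # Count only follow-ups on or after the first stage and inside the window.
        if follow_up is not None and timedelta(0) <= follow_up - start <= window:
            converted += 1
    return converted / len(first_stage)


rate = windowed_conversion(mock_interviews, career_fair_attendance)
print(f"{rate:.0%} of mock-interview students attended a career fair within 30 days")
```

In this made-up data, only one of the three students attended within the window, so the rate comes out to roughly a third.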

Conversion rates are compelling because they suggest a link between tool use and later behavior without requiring a randomized controlled trial. They show momentum. And they're comparative. You can benchmark this semester against last semester, or compare students who used the tool against those who didn't.

One institution we work with found that students who completed at least two AI-assisted resume reviews were 40% more likely to attend an employer networking event. Is that proof the AI caused the behavior? Technically, no. Motivated students might do both regardless. But it's a far more persuasive data point than "2,300 students used the platform."
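A figure like "40% more likely" is a relative difference between two rates, not a gap in percentage points. The sketch below shows the arithmetic with hypothetical counts chosen only to make the math easy to follow; they are not the institution's actual numbers.

```python
def attendance_rate(attended, total):
    """Fraction of a group that attended at least one event."""
    return attended / total if total else 0.0


def relative_lift(group_rate, baseline_rate):
    """How much more likely the group is to attend, relative to the baseline."""
    if baseline_rate == 0:
        raise ValueError("baseline rate is zero; relative lift is undefined")
    return group_rate / baseline_rate - 1


# Hypothetical counts for illustration only.
reviewers_rate = attendance_rate(attended=70, total=200)  # 35% of repeat reviewers
others_rate = attendance_rate(attended=200, total=800)    # 25% of everyone else

lift = relative_lift(reviewers_rate, others_rate)
print(f"{lift:.0%} more likely (a {reviewers_rate - others_rate:.0%}-point gap)")
```

Reporting both the relative lift and the underlying rates tends to head off the follow-up question of "40% more than what?"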

One Thing to Do This Week

Pull your AI tool's usage data and your career fair or employer event attendance list from last semester. Cross-reference them. Even a basic comparison between tool users and non-users gives you something most career centers don't have: a side-by-side story connecting technology engagement to student action. That's the foundation every stronger metric builds on.
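If both lists can be exported as CSV, the cross-reference can be sketched with Python's standard library alone. The column names, email addresses, and roster below are hypothetical; real exports will differ by platform, and matching on email is an assumption that only holds if both systems store the same address.

```python
import csv
import io

# Hypothetical exports, inlined as strings so the sketch is self-contained.
usage_csv = """email,sessions
Ana@uni.edu,3
ben@uni.edu,1
"""
attendance_csv = """email,event
ana@uni.edu,Fall Career Fair
cy@uni.edu,Fall Career Fair
"""
roster = ["ana@uni.edu", "ben@uni.edu", "cy@uni.edu", "di@uni.edu", "eli@uni.edu"]


def emails_from(csv_text, column="email"):
    """Read one column from CSV text, normalizing case and whitespace."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[column].strip().lower() for row in reader}


def compare_attendance(roster, users, attendees):
    """Event attendance rate for tool users versus non-users on the roster."""
    roster = {email.strip().lower() for email in roster}
    users = users & roster
    non_users = roster - users

    def rate(group):
        return len(group & attendees) / len(group) if group else 0.0

    return {"users": rate(users), "non_users": rate(non_users)}


result = compare_attendance(roster, emails_from(usage_csv), emails_from(attendance_csv))
print(f"Tool users: {result['users']:.0%}, non-users: {result['non_users']:.0%}")
```

To run this against real files, replace the inline strings with the text of the exported CSVs. Normalizing the emails matters more than it looks, since a stray capital letter or trailing space would otherwise count the same student as two people.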

Frequently Asked Questions

How do career centers measure the impact of AI tools on student outcomes?

The most effective approach connects three data points in sequence: AI tool usage, a subsequent student behavior change (like applying for a job or attending a career event), and an eventual outcome from first-destination surveys. Single metrics like login counts or satisfaction scores rarely tell a convincing story on their own.

What is the best metric to justify career center technology spending?

Conversion rates between stages of student engagement tend to be the most persuasive in budget conversations. For example, the percentage of students who used an AI resume tool and then applied to jobs through the platform. These rates imply momentum and allow semester-over-semester comparison.

Why aren't first-destination surveys enough to measure career center success?

First-destination surveys have a significant timing gap, as results typically aren't available until six months or more after graduation. Knowledge rates also vary widely, with some institutions struggling to capture data on even 30% of graduates. This makes them poorly suited to mid-year technology renewal decisions.

How can small career center teams track meaningful outcomes with limited staff?

Start by cross-referencing data sources you already have, such as platform usage logs and career event attendance lists. Even a basic comparison of tool users versus non-users provides a side-by-side narrative. You don't need a data engineer to build a rough version of a trajectory story.
